This is part 1 of my explorations of using deep learning for assisting the process of music composition. In this part, I look at some almost-winning output of a model trained by deep learning methods on over 23,000 folk tunes, and make improvements to produce a session-ready piece.

There have been several recent explorations of music generation using statistical models learned by “deep learning” methods:

- “Lisl’s Stis”: Recurrent Neural Networks for Folk Music Generation, in which the system is trained on 1180 tunes in ABC format from a collection published in 1778

- Irish folk music generation, in which the system is trained on 23,962 tunes in ABC format from a growing online collection

- Modeling and generating sequences of polyphonic music with the RNN-RBM, in which the systems are trained on various collections of MIDI music

- Composing music with Recurrent Neural Networks, in which the system is trained on 8-measure fragments extracted from an online collection of classical MIDI music

- Synthesizing digital audio with recurrent neural networks, in which a system is trained on digital music audio of the group Madeon. (Code available here.)

These are exciting contributions to the well-studied domain of algorithmic music composition. The prospect of developing a system that can learn to emulate characteristics of a collection of music data is one of the aims of “music metacreation”. Thus far, the most convincing demonstrations of music style emulation in my opinion are the EMI system of David Cope, and the Continuator of François Pachet. Historically, the Illiac Suite for String Quartet (1956) is a monumental piece in this direction, but it follows an approach based on expert knowledge rather than learning from a corpus of exemplary music.

In The Infinite Irish Trad Session, we have used a recurrent neural network with long short-term memory to build a model from 23,962 traditional Irish tunes (well, a large number of the tunes are Irish). We then sample from that model to generate a new tune, and have our performance system realise it in a way imitating a real Irish trad session. So far, we have produced over 32,000 recordings, which amounts to 491 hours of audio (and a surprised administrator asking why my home directory has ballooned to over 80 GB). Every 5 minutes, a system randomly selects seven recordings from this collection and serves them as a set. To see all of the MP3s we have produced so far, look here.
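
The set selection step can be sketched in a few lines of Python. This is only an illustration, not the code behind the site: `pick_set` and the file names are hypothetical, and sampling without replacement (so that no recording appears twice in one set) is an assumption.

```python
import random

def pick_set(recordings, size=7, rng=random):
    """Pick `size` distinct recordings at random to serve as one set."""
    return rng.sample(recordings, size)

# Hypothetical file names standing in for the real collection.
recordings = [f"tune_{i:05d}.mp3" for i in range(32000)]

current_set = pick_set(recordings)
print(current_set)
```

A scheduler would call something like `pick_set` once every 5 minutes and serve the resulting list.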

After spending several hours listening to these sets (with my wife feigning incredible enthusiasm, but also surprise at how good the results can sound! Thanks honey!!), it is clear that the model learned does encapsulate important characteristics of the music style. Many of the pieces have an Irish feel, and I am surprised how many are close to being ready for a session.

For instance, here is the ABC produced by the system that it has titled “The Doutlace”:

T: Doutlace, The

M: 4/4

L: 1/8

K: Gmaj

A2 eA cAdA|BAGA BG ~G2|A2 eA cAeA|decd BA A2|A2 eA cAeA|GABG AGEG|AGEG FGAB|c2 BG AFDF:||:EAAB cABc|dBGA BdeB|cAAB cded|eAAB cedB|ecAB cAAB|cADA BAGF|EFGE FGAB|1 cABG A2 AB:|2 cABG A4||cAGA EA A2|cdef gedB| ABAG EA A2|dcde fdAG| |:cAGE FGAB|c2 cd efaa|gefa gedc|BABG FAE<G|EFGd EAGE|c2 AG EGGA|Ec ~c2 cdeg|~f3e decd||

Here it is converted to staff notation, with the audio of its realisation by our performance system.

I think this is a promising tune (it is a kind of “reel” since it is in 4/4). The system has produced two sensible melodies of 8 measures, each one repeated. (A “standard” Irish tune is two 8-bar sections, each repeated.) The first melody (the A section, or “tune”) has a nice little figure appearing in the first measure, which returns in the 3rd and 5th measures, but varied with the D raised to an E. The second melody (the B section, or “turn”) has its own little figure, which is repeated and varied. The contours of the two melodies are good. The melody of the B section spends more of its time in the higher notes than that of the A section, which is also typical. The intervals in the cadence in the fourth measure of the tune provide contrary motion to the cadence at the end of the turn. The A tone dominates throughout both sections and makes the tune and the turn sound like they belong together. The last section is odd, however, and doesn’t really fit. It feels as if the model has begun a second piece that it doesn’t finish.

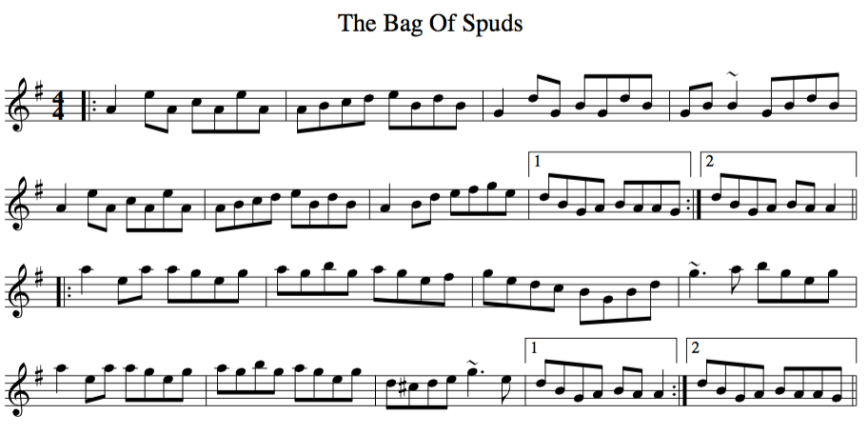

“The Doutlace” is almost too good (and I spent less than a minute to find it among the 32,000 recordings), so I wonder if the system has plagiarised. Searching for the main figure of its tune among the 23,962 ABC tunes of our collection turns up nothing (“A2\s*e\s*A\s*c\s*A\s*d\s*A”); however, I find its variation occurs in the tune of a reel called “The Bag of Spuds”:

It also appears in the tune of the reel “Matt Peoples'”:

and the turn of the funny reel “Clais An Adhmid”:

and the tune of the reel “The New Copperplate”

and that’s it! These five reels are not all that similar to each other, even though the same figure appears in all.

So, it seems that if our system has been copying its learning materials, it is not so easy to detect.
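
A search of this kind can be sketched in a few lines of Python. This is a minimal sketch and not the script actually used: `figure_pattern` and `find_figure` are hypothetical helpers, the toy corpus stands in for the real collection, and each tune is assumed to be available as a plain string of ABC. The pattern tolerates whitespace between note tokens, is case-sensitive (case encodes the octave in ABC), and does not account for transpositions.

```python
import re

def figure_pattern(tokens):
    """Compile a regex matching the note tokens in order, with optional whitespace between them."""
    return re.compile(r"\s*".join(re.escape(t) for t in tokens))

def find_figure(tokens, corpus):
    """Return the titles of the tunes whose ABC body contains the figure."""
    pattern = figure_pattern(tokens)
    return [title for title, abc in corpus.items() if pattern.search(abc)]

# Toy corpus (hypothetical stand-in for the 23,962 tunes), using fragments from this post.
corpus = {
    "The Doutlace": "A2 eA cAdA|BAGA BG ~G2|A2 eA cAeA|decd BA A2|",
    "The Mechanical Reel": "|: d2AB dGG2 | F2FG E2FD | d2AB EDDA | Bddf BGGF :|",
}

main_figure = ["A2", "e", "A", "c", "A", "d", "A"]  # first measure of the tune
variation = ["A2", "e", "A", "c", "A", "e", "A"]    # the D raised to an E

print(find_figure(main_figure, corpus))
print(find_figure(variation, corpus))
```

Matching on the raw text like this only finds literal copies of a figure, which is one reason copying would be hard to detect this way.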

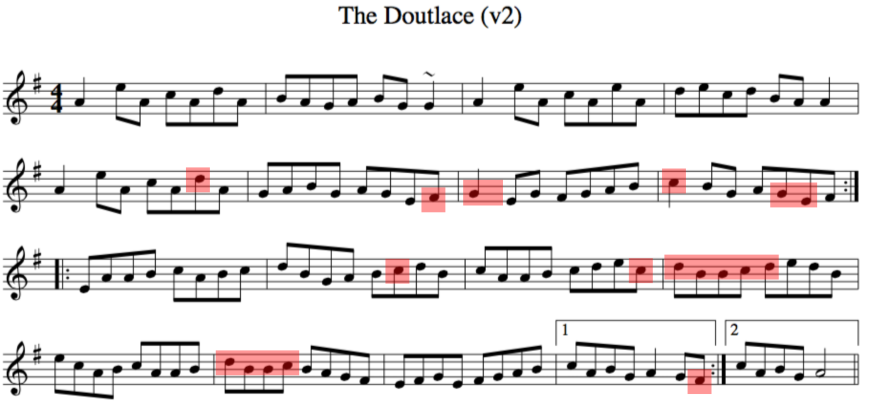

Still, there are a few ways I want to improve “The Doutlace,” in terms of sound, music, and play. Everything after the turn should be removed. The ending of the turn is good, but that of the tune is lacking. I change the third appearance of the figure in the tune such that the E is dropped to the D, which echoes its initial appearance. I also make G major more prominent in the last two measures of the tune. In the turn, I think the figures EAA and cAA appear too many times, so I vary the third and fifth appearances by raising the A notes a whole step to B. This clashes with the root (A) to create tension, and strengthens the downward resolution. The red boxes in the score below show my changes, with a recording of its realisation:

T: The Doutlace (v2)

M: 4/4

L: 1/8

K: Ador

A2 eA cAdA|BAGA BG ~G2|A2 eA cAeA|decd BA A2|A2 eA cAdA|GABG AGEF|G2 EG FGAB| c2 BG AGEF:||:EAAB cABc|dBGA BcdB|cAAB cdec|dBBc dedB|ecAB cAAB|dBBc BAGF|EFGE FGAB|1 cABG A2 GF:|2 cABG A4||

All in all, just a few minor modifications to the output, and we now have a tune ready for the session. Now: can I put my name on it as the composer? Or have I merely edited the output of a nameless model? (Typical for folk music, the composer is lost to the sands of time.)

In the next parts, I will be taking a look at the “failures” of the model, and the opportunities they bring.

UPDATE: Here I am playing the piece.

Amazing work!

How do you check to make sure that the result is unique? Do you have a database of songs that you compare each one against, or how do you do it?

Thank you. That is very kind. For now, we are not checking everything for uniqueness. Initially, I was suspicious because the results were too good; but of those that I checked (in the data we culled from The Session, and without considering transpositions), they seem to be unique.

Around 2007 there was a burst of activity from folks developing computer programs to write Irish trad tunes based on the Session (and other) archives. I did one in Lisp that produced some decent output. It was discussed somewhere online, but I can’t remember where at this point. I don’t see it at The Session.

Ah, found an example of a tune my program wrote:

X: 1

T: The Mechanical Reel

K: D

|: "D"d2AB "G"dGG2 | "D"F2FG "D"E2FD | "D"d2AB "D"EDDA | "Bm"Bddf "G"BGGF :|

|: "D"FDFG "D"ABFA | "D"FDFG "G"BGAB | "D"FDFG "D"ABFA | "Bm"Bddf "G"BGGF :|

(Some boring chord progressions there!) I’d be interested to hear your assessment.

Interesting! Thanks for sharing. Here is your reel realised by our virtual musicians: http://www.eecs.qmul.ac.uk/~sturm/research/RNNIrishTrad/composition/TheMechanicalReel.mp3

It has a nice feeling to it. Each of the repeated phrases is half as long as it should be. Typically, the two parts are 8 measures each. Feeding into our model what your system has produced completes the lines:

X: 1

T: The Mechanical Reel

M: 4/4

L: 1/8

K: Dmaj

|: "D"d2AB "G"dGG2 | "D"F2FG "D"E2FD | "D"d2AB "D"EDDA | "Bm"Bddf "G"BGGF |

"D"A2AB "D"dgfe | "G"dBBA "A"FGAc | "G"B2Bd "A"cAFE |1 "D"D4 D2Ad :|2 "D"D2d2 d2cd ||

|: "D"FDFG "D"ABFA | "D"FDFG "G"BGAB | "D"FDFG "D"ABFA | "Bm"Bddf "G"BGGF |

"D"FFAF "D"DDFA | "G"BGBd "A7"cdec | "D"dfaf "A7"gec=A | "D"dfed "D"d4 :|

Here is the realisation: http://www.eecs.qmul.ac.uk/~sturm/research/RNNIrishTrad/composition/TheMechanicalReelv2.mp3

Not too successful, but at least it resolves! This is where I as a human would step in and fix things. :)

I will look for the 2007 activity. Thanks for the info!

Thanks for your analysis and running it through your system, the results are interesting!

One thing my system does is pattern its new compositions off of existing ones. In this case, the model composition was “Rolling in the Ryegrass” which (at least in its simple iconic version) has 4 bar parts. It was also trying in this tune to have the same “answer” in both halves of the tune and create the kind of tune that begs for a repeat (e.g., Out on the Ocean). (As you say, a real musician would write some kind of resolution for the last repeat!) Another thing you can notice in this tune is the sequence “FDFG ABFA | FDFG BGAB | FDFG ABFA” in the turn where the program has picked up on a motif in trad music of writing “A B | A C | A B” where B & C have some sort of musical relationship.

My program was specifically looking for some of those things. It’s interesting to see how much of that structure deep learning can pick up without any specific priming.

I see: https://thesession.org/tunes/87 That is a short tune and turn!

I think that would be quite useful: “Make me something that is like so and so, but different.” Movie music composers are brilliant at that. :)

This might interest you… http://blog.sitedaniel.com/2010/05/kookaburra/

Thanks for the link! I find the field of intellectual property fascinating. With the recent ruling against “Blurred Lines”, we need to create all possible “feels” separate from melodies.

Bob, Maybe music composition is something that is not deep learnable. As I understand it, deep learning is an approach that replicates some aspects of human cognition. But here’s the thing: human beings do not easily compose music, at least not good original music. Some people compose one good item of music in their lifetime, and never succeed in composing any more music that is as good as their first hit. Most people are very good at _judging_ the quality of music, and most people living in richer countries have been exposed to hundreds or thousands of items of high quality music over their lifetime. Yet most people have little idea about how to compose new music that is anything more than a weak variant of something they already know.

Thanks PJ. Before we can suggest a task is “deep learnable”, we would need to define that task first, and the optimisation problem!! :)

Here is a very good recent interview that nicely dispels the notion that deep learning replicates aspects of human cognition: http://spectrum.ieee.org/robotics/artificial-intelligence/machinelearning-maestro-michael-jordan-on-the-delusions-of-big-data-and-other-huge-engineering-efforts

Wow, this is astonishing. I might embark on a similar project. Two questions: Does the RNN ever produce incorrectly formatted output (for example, bars in the wrong places)? And where does the percussion in the audio come from?

Thanks Leon. Yes, the RNN can produce “mistakes”, such as the wrong number of notes in a measure, missing repeats, etc. These mistakes also exist in the training data, introduced by humans. The percussion comes from a script I wrote, and is derived from the RNN output.
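
Mistakes of the first kind, the wrong number of notes in a measure, are easy to flag automatically. The following is a minimal sketch rather than anything from the actual system: `measure_lengths` and `bad_measures` are hypothetical helpers, and the parser assumes only single notes and rests (no triplets or bracketed chords), with any chord symbols written in straight double quotes. Only the tune body is passed in, not the header fields. For M: 4/4 and L: 1/8, every full measure should add up to 8 units.

```python
import re
from fractions import Fraction

# Optional accidental, pitch or rest letter, octave marks, then an optional length such as 2, /2, 3/2 or /.
NOTE = re.compile(r"(?:\^{1,2}|_{1,2}|=)?[A-Ga-gzx][,']*(\d*)(/?)(\d*)")
# Bar lines and repeat signs: |, ||, |:, :|, :||:, ::
BARLINE = re.compile(r":?\|+:?|::")

def measure_lengths(body):
    """Return the length of each measure, in units of the default note length (L:)."""
    body = re.sub(r'"[^"]*"', "", body)  # drop chord symbols such as "Bm"
    lengths = []
    for measure in BARLINE.split(body):
        total = Fraction(0)
        for num, slash, den in NOTE.findall(measure):
            n = int(num) if num else 1
            d = int(den) if den else (2 if slash else 1)
            total += Fraction(n, d)
        if total:  # skip the empty chunks around bar lines
            lengths.append(total)
    return lengths

def bad_measures(body, expected=8):
    """Return (measure number, length) for every measure whose length differs from `expected`."""
    return [(i, n) for i, n in enumerate(measure_lengths(body), start=1) if n != expected]

# The second measure is deliberately one eighth note short (a made-up example).
print(bad_measures("A2 eA cAdA|A2 eA cAd|BAGA BG ~G2|"))
```

Ornaments (`~`) and broken rhythms (`<`, `>`) are ignored because they do not change the total length of a measure. Pickup measures can legitimately be short, so a flagged measure is a candidate for inspection rather than a definite error.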
